Reporting is essential, but rigorous analysis and data-driven operations are where the ROI from data accrues and compounds.

I can think of at least 3 types of analysis that organizations execute:

- Responsive: this is the most common analysis pattern in organizations, and can take on a few different shapes.

  - in response to metric movements in the business, identifying which segments are driving the change and isolating the effect of mix changes versus behavior changes

  - analyzing the impact of specific interventions or experiments across a set of metrics

  - reacting to external pressures, such as current business conditions, by identifying which segments are highly profitable and which are not

- Exploratory: as the name implies, this involves exploring datasets to derive insights and to originate or validate hypotheses, ultimately to find opportunities to advance the business.

  Common examples include:

  - inspecting user behavior at a D2C brand to identify product improvements

  - analyzing a process workflow, such as order fulfillment at a logistics company or a series of tasks at a managed marketplace, to isolate segments that experience friction or delays

  - classifying high- and low-retention users in any business model, and identifying the attributes that could explain the difference

- Planning: once you analyze the data and develop a hypothesis, planning-type analysis helps “size” opportunities, compare alternatives, and so on.

As an example, if you identify low-retention segments that could be treated with an intervention, it is worth asking what the impact of such an intervention would be. Further, is it better to alter the mix of segments or the retention rates themselves? Or to improve acquisition efforts earlier in the funnel versus retention later in the funnel?
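To make the sizing question concrete, here is a minimal sketch that compares three scenarios against a baseline: lifting the retention rate of the low-retention segment, shifting the mix toward the high-retention segment, and acquiring more users at the same mix. The segment names, user counts, rates, and scenario sizes are all made-up assumptions for illustration, not data from any real business.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Segment:
    name: str
    users: int        # users acquired into the segment (hypothetical)
    retention: float  # fraction of those users retained (hypothetical)


def retained_users(segments):
    """Total retained users: sum of segment size times segment retention."""
    return sum(s.users * s.retention for s in segments)


# Hypothetical baseline: two segments with different retention rates.
high = Segment("high_retention", users=4_000, retention=0.60)
low = Segment("low_retention", users=6_000, retention=0.20)
baseline = [high, low]

scenarios = {
    # Change the rate: an intervention lifts low-segment retention by 5 points.
    "lift_low_segment_rate": [high, replace(low, retention=low.retention + 0.05)],
    # Change the mix: 1,000 users move from the low to the high segment.
    "shift_mix_to_high": [
        replace(high, users=high.users + 1_000),
        replace(low, users=low.users - 1_000),
    ],
    # Change acquisition: 10% more users at the same mix and rates.
    "acquire_10pct_more": [
        replace(s, users=s.users + s.users // 10) for s in baseline
    ],
}

base = retained_users(baseline)
impact = {name: retained_users(segs) - base for name, segs in scenarios.items()}

# Rank scenarios by incremental retained users.
for name, delta in sorted(impact.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {delta:+.0f} retained users vs baseline")
```

The point is less the specific numbers than the shape of the comparison: each scenario changes exactly one lever, so the deltas can be read side by side.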

Responsive analysis is the most commonly executed type because it is a natural extension of business reporting and metrics monitoring.
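The mix-versus-behavior split mentioned under Responsive analysis can be sketched for a blended rate metric (a conversion or retention rate that is a share-weighted average across segments). The sketch assumes one common convention: the mix effect is valued at the old rates and the rate effect at the new shares, so the two add up exactly to the total change. Other conventions exist, and the segment names and numbers below are hypothetical.

```python
def decompose_change(before, after):
    """Split the change in a share-weighted rate into mix and rate effects.

    `before` and `after` map segment name -> (share, rate), with shares
    summing to 1 in each period. Convention assumed here:
      mix effect  = sum((share_after - share_before) * rate_before)
      rate effect = sum(share_after * (rate_after - rate_before))
    """
    if before.keys() != after.keys():
        raise ValueError("both periods must cover the same segments")
    mix_effect = 0.0
    rate_effect = 0.0
    for segment, (share_0, rate_0) in before.items():
        share_1, rate_1 = after[segment]
        mix_effect += (share_1 - share_0) * rate_0
        rate_effect += share_1 * (rate_1 - rate_0)
    return mix_effect, rate_effect


def blended_rate(period):
    """Overall rate as the share-weighted average of segment rates."""
    return sum(share * rate for share, rate in period.values())


# Hypothetical example: the overall conversion rate fell between two periods.
before = {"new_users": (0.5, 0.10), "returning_users": (0.5, 0.30)}
after = {"new_users": (0.7, 0.11), "returning_users": (0.3, 0.30)}

mix_effect, rate_effect = decompose_change(before, after)
total_change = blended_rate(after) - blended_rate(before)

print(f"total change: {total_change:+.3f}")
print(f"  from mix:   {mix_effect:+.3f}")
print(f"  from rates: {rate_effect:+.3f}")
```

In this made-up case the overall rate drops even though no segment got worse, because the mix shifted toward the lower-converting segment. That is exactly the kind of movement that is easy to misread without the split.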

But we need to do all three types consistently (Responsive, Exploratory, and Planning): get the REPs in, so to speak.

What’s holding us back is that these analyses are too slow, manual, error-prone, and dependent on technical analysts. Data is nuanced, but we don’t need to accept this reality as normal: that these operations will always be manual and bespoke (“can someone run this analysis for me?”) or take time (“she’ll get to it next month”), or that many such requests will never be completed.

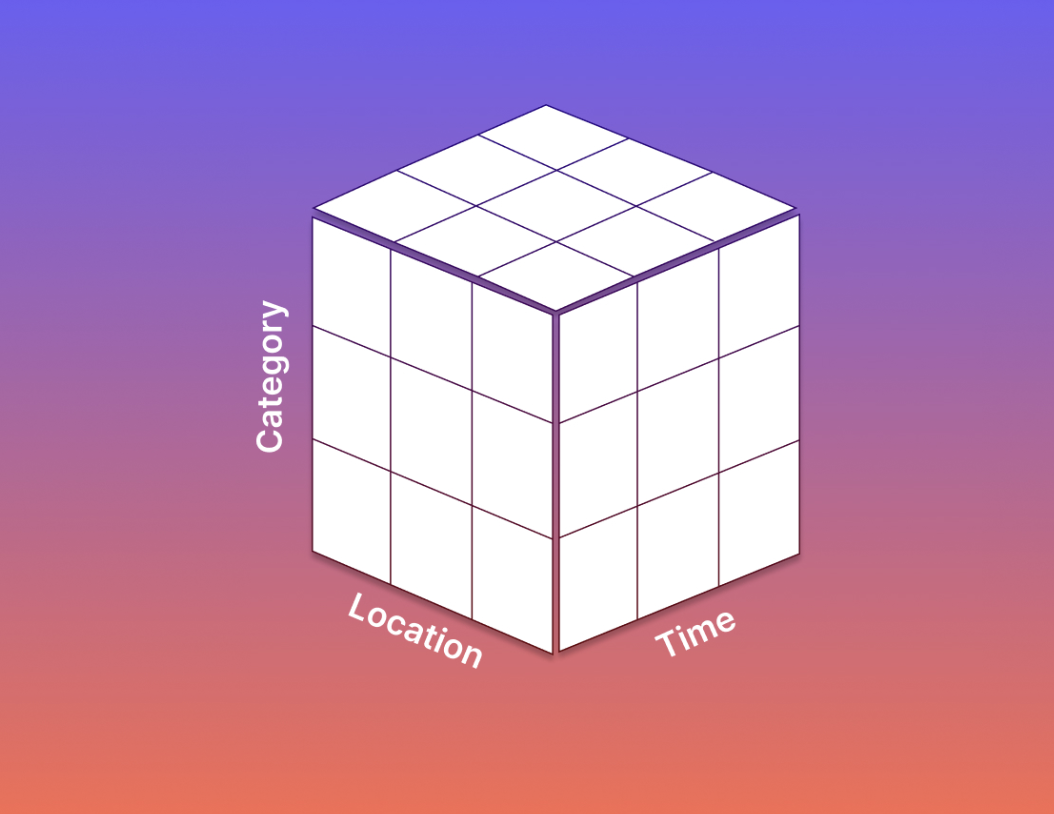

Tools can change to unlock superior behavior. I believe there is now enough history of data modeling to standardize the business concepts, from the base concepts of entities, metrics, and attributes to newer concepts like a business process (e.g., a funnel) or the notions of segments, experiments, and scenarios.
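As a rough illustration of what "standardizing the business concepts" could look like, here is a minimal sketch in which entities, metrics, and segments are declared once and then combined freely. Every name here (`Entity`, `Metric`, `Segment`, `evaluate`, and the sample rows) is a hypothetical construct for this post, not the API of any existing tool.

```python
from dataclasses import dataclass
from typing import Callable, Mapping, Sequence, Tuple

Row = Mapping[str, object]


@dataclass(frozen=True)
class Entity:
    name: str                    # e.g. "user"
    key: str                     # column that identifies one instance
    attributes: Tuple[str, ...]  # descriptive columns available on it


@dataclass(frozen=True)
class Metric:
    name: str
    entity: Entity
    compute: Callable[[Sequence[Row]], float]  # aggregates rows to a number


@dataclass(frozen=True)
class Segment:
    name: str
    entity: Entity
    predicate: Callable[[Row], bool]  # selects which rows belong


def evaluate(metric: Metric, segment: Segment, rows: Sequence[Row]) -> float:
    """Compute a metric over the rows that fall inside a segment."""
    if metric.entity != segment.entity:
        raise ValueError("metric and segment must be defined on the same entity")
    return metric.compute([row for row in rows if segment.predicate(row)])


def share_retained(rows: Sequence[Row]) -> float:
    """Fraction of rows flagged as retained; 0.0 for an empty segment."""
    return sum(1 for row in rows if row["retained"]) / len(rows) if rows else 0.0


# Definitions a technical user might map once (all hypothetical).
user = Entity("user", key="user_id", attributes=("plan", "retained"))
retention_rate = Metric("retention_rate", user, share_retained)
free_plan = Segment("free_plan", user, lambda row: row["plan"] == "free")

rows = [
    {"user_id": 1, "plan": "free", "retained": True},
    {"user_id": 2, "plan": "free", "retained": False},
    {"user_id": 3, "plan": "paid", "retained": True},
]

free_plan_retention = evaluate(retention_rate, free_plan, rows)
print(f"{retention_rate.name} for {free_plan.name}: {free_plan_retention:.2f}")
```

Once definitions like these exist, asking for a known metric on a known segment becomes a lookup rather than a bespoke request.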

And if these can be mapped by technical users, the wider data and business teams can execute high-value analysis with significantly lower friction, 10x-ing the REPs.

Excited for this future!